# How to Make Your Photos Searchable by What's Inside Them: A Complete Guide to Visual Content Discovery

In an era when billions of photos are captured and stored every day, finding a specific image by its visual content has become a practical necessity for photographers, content creators, businesses, and everyday users alike. Making your photos searchable by what's inside them means moving beyond file names and folder hierarchies to AI-powered recognition systems that can identify objects, people, scenes, text, and even emotions within images. These systems combine machine learning, computer vision, and well-maintained metadata so that a search such as "dog on the beach" returns the pictures you actually have in mind. Whether you're managing a personal library of family photos, organizing a professional portfolio, or maintaining a commercial image database, effective visual search can markedly improve your workflow, content discoverability, and overall digital asset management. Getting there involves several interconnected strategies: choosing the right platforms and tools, optimizing metadata, implementing AI-powered tagging, and understanding the technologies that power modern visual search engines.

1. Understanding Visual Recognition Technology and Its Core Components

Visual recognition technology forms the backbone of modern photo search, using deep neural networks to analyze and interpret the contents of digital images. At its core, this technology employs convolutional neural networks (CNNs) trained on millions of labeled images to recognize patterns, shapes, colors, textures, and contextual relationships within photographs. These systems can identify and categorize thousands of different objects, from common items like cars, animals, and food to more complex concepts such as architectural styles, weather conditions, and human activities. The analysis proceeds through multiple layers: early layers detect low-level features such as edges, corners, and simple shapes; middle layers combine these into recognizable parts and patterns; and the final layers map those patterns onto semantic labels that describe the overall scene. Modern visual recognition systems also incorporate facial recognition, optical character recognition (OCR) for text within images, scene classification for identifying locations and environments, and, in some products, emotion detection that estimates mood from facial expressions. Accuracy varies with image quality, subject matter, and the data a model was trained on, so understanding these foundations helps you choose platforms and tools that fit your needs and set realistic expectations about what current systems can and cannot recognize.
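To make the idea of low-level feature detection concrete, here is a minimal, dependency-free Python sketch of what a single first-layer filter does: it slides a 3x3 kernel over a grayscale image and keeps only the positive responses. The hand-written Sobel kernel is an illustrative stand-in; a real CNN learns its kernels from data and stacks many layers on top of them.

```python
# Hand-written Sobel-x kernel: responds to vertical edges (dark -> bright).
# In a trained CNN the first-layer kernels are learned, not hand-coded.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def convolve2d(image, kernel):
    """Valid (no padding, stride 1) 2D cross-correlation, which is what
    deep-learning 'convolution' layers actually compute."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        feature_map.append(row)
    return feature_map


def relu(feature_map):
    """Zero out negative responses, as a CNN activation layer would."""
    return [[max(0, v) for v in row] for row in feature_map]


# Toy 5x6 grayscale image: dark left half, bright right half.
image = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
for row in relu(convolve2d(image, SOBEL_X)):
    print(row)  # strongest response in the columns that straddle the edge
```

Flipping the image so it goes from bright to dark produces negative responses that the ReLU step discards, which is why a network needs many differently oriented filters.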

2. Choosing the Right Platform - Cloud Services vs. Local Solutions

Choosing a platform to make your photos searchable is a critical decision that will significantly affect your workflow, privacy, costs, and overall experience. Cloud-based solutions such as Google Photos, Amazon Photos, and Microsoft OneDrive offer powerful visual recognition with minimal setup, automatically analyzing uploaded images and generating searchable tags without requiring technical expertise. (Apple Photos, which syncs through iCloud Photos, performs much of its image analysis on the device itself rather than in the cloud.) These platforms draw on large computing resources and regularly updated AI models to provide object recognition, face detection, and scene classification, along with cross-device synchronization and collaborative sharing. However, cloud solutions raise considerations around privacy, data ownership, ongoing subscription costs, and dependence on internet connectivity for full functionality. Alternatively, local solutions such as Adobe Lightroom Classic, Excire Search (an AI search plug-in for Lightroom Classic), or the open-source digiKam give you greater control over privacy and processing, letting you keep complete ownership of your images while still benefiting from visual search. Local solutions often offer more customizable tagging, tighter integration with existing workflows, and the ability to work offline, but they can require more technical knowledge, upfront license costs for commercial tools, and ongoing maintenance. Hybrid approaches that keep sensitive images on local storage while using cloud services for the rest of a collection are increasingly popular, balancing privacy concerns against functionality.

3. Optimizing Image Metadata and EXIF Data for Enhanced Searchability

Well-maintained metadata is the foundation of comprehensive photo search, extending far beyond filename conventions to include EXIF data, keywords, descriptive captions, and structured tags that serve both human readers and machine indexing. EXIF (Exchangeable Image File Format) data, recorded automatically by cameras, includes the shooting date and time, camera settings, GPS coordinates (when the device records them), lens details, and other technical parameters that can significantly improve search when properly used. Keywords, captions, and other descriptive fields are usually stored using the IPTC and XMP standards, either embedded in the image file or in an XMP sidecar file alongside it. By making sure camera clocks are set accurately, enabling GPS when appropriate, and filling in these descriptive fields, photographers can build rich layers of information that support both automated and manual search. More advanced metadata work involves consistent keyword hierarchies, a standardized vocabulary for objects and scenes, and contextual information such as event names, locations, and participants. Professional photographers and content managers often use controlled vocabularies or taxonomies to keep large collections consistent, along with batch processing tools that apply metadata to many images at once. Understanding how each platform reads and uses metadata also lets you tailor your tagging for specific search engines and recognition systems while staying compatible with the IPTC and XMP standards that most photo software reads.
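As a sketch of writing a keyword hierarchy in a standard format, the Python snippet below builds a minimal XMP packet using only the standard library. It assumes the common convention of listing flat keywords in `dc:subject` and full keyword paths, separated by `|`, in Lightroom's `lr:hierarchicalSubject` field; the helper names are hypothetical, and other applications may read different fields, so check what your software expects before batch-writing sidecars.

```python
import xml.etree.ElementTree as ET

# Namespaces used in the XMP packet. lr:hierarchicalSubject is the Lightroom
# convention for keyword paths; other tools may use different fields.
NS = {
    "x": "adobe:ns:meta/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "dc": "http://purl.org/dc/elements/1.1/",
    "lr": "http://ns.adobe.com/lightroom/1.0/",
}
for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)


def qname(prefix, local):
    """Build an ElementTree '{uri}local' name from a namespace prefix."""
    return f"{{{NS[prefix]}}}{local}"


def _bag(parent, tag, items):
    """Append <tag><rdf:Bag><rdf:li>item</rdf:li>...</rdf:Bag></tag>."""
    bag = ET.SubElement(ET.SubElement(parent, tag), qname("rdf", "Bag"))
    for item in items:
        ET.SubElement(bag, qname("rdf", "li")).text = item


def build_xmp(keyword_paths):
    """keyword_paths: e.g. [["Places", "France", "Paris"], ["Events", "Wedding"]].

    Writes every level as a flat dc:subject keyword (deduplicated, in order)
    and each full path as a '|'-separated lr:hierarchicalSubject entry.
    """
    root = ET.Element(qname("x", "xmpmeta"))
    rdf = ET.SubElement(root, qname("rdf", "RDF"))
    desc = ET.SubElement(rdf, qname("rdf", "Description"), {qname("rdf", "about"): ""})
    flat = list(dict.fromkeys(term for path in keyword_paths for term in path))
    _bag(desc, qname("dc", "subject"), flat)
    _bag(desc, qname("lr", "hierarchicalSubject"), ["|".join(p) for p in keyword_paths])
    return ET.tostring(root, encoding="unicode")


print(build_xmp([["Places", "France", "Paris"], ["Events", "Wedding"]]))
```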

4. Implementing AI-Powered Auto-Tagging Systems

AI-powered auto-tagging systems represent the leading edge of automated photo organization, using machine learning models to analyze image content and generate tags, descriptions, and category assignments without manual intervention. These systems typically combine several AI techniques: computer vision for object and scene recognition, natural language processing for generating descriptive text, and deep learning models trained on large datasets to capture contextual relationships and meaning within images. Modern auto-tagging services can identify hundreds to thousands of objects, animals, activities, and concepts, and some also attempt more nuanced judgments about artistic style, emotional tone, or composition. Implementation typically involves choosing a service such as Google Cloud Vision API, Amazon Rekognition, or Microsoft Azure AI Vision (formerly Computer Vision), or relying on the AI features built into photography software, such as Adobe Sensei in Adobe's applications; each has different strengths and suits different use cases. Successful auto-tagging requires balancing automation against human oversight, because AI systems will sometimes generate incorrect or irrelevant tags that need manual correction. Advanced users often set confidence thresholds that automatically accept high-confidence tags while flagging uncertain ones for human review, combining the speed of automation with the accuracy of human judgment. Many services also support custom model training, letting you teach the system to recognize specific objects, brands, or concepts relevant to your domain or industry.
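The confidence-threshold workflow can be sketched in a few lines of Python. The sample labels, the 0.90 and 0.60 thresholds, and the `triage_labels` helper are all hypothetical. The sketch assumes scores have already been normalized to the range 0 to 1: Google Cloud Vision label scores already use that range, while Amazon Rekognition reports `Confidence` as a percentage that you would divide by 100.

```python
from dataclasses import dataclass, field


@dataclass
class TagTriage:
    accepted: list[str] = field(default_factory=list)      # apply automatically
    needs_review: list[str] = field(default_factory=list)  # queue for a human
    rejected: list[str] = field(default_factory=list)      # discard


def triage_labels(labels, accept_at=0.90, review_at=0.60):
    """Sort (label, score) pairs into accept / review / reject buckets.

    Scores are assumed to be normalized to 0..1; divide
    percentage-style confidences by 100 first.
    """
    if not 0.0 <= review_at <= accept_at <= 1.0:
        raise ValueError("expected 0 <= review_at <= accept_at <= 1")
    triage = TagTriage()
    # Highest-confidence labels first, so each bucket stays ranked.
    for name, score in sorted(labels, key=lambda pair: pair[1], reverse=True):
        if score >= accept_at:
            triage.accepted.append(name)
        elif score >= review_at:
            triage.needs_review.append(name)
        else:
            triage.rejected.append(name)
    return triage


# Hypothetical output from an auto-tagging service for one photo.
result = triage_labels([("beach", 0.97), ("sunset", 0.82), ("dog", 0.41), ("sky", 0.93)])
print(result.accepted, result.needs_review, result.rejected)
```

Raising `accept_at` sends more labels to the review queue; the right balance depends on how costly a wrong tag is for your collection.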

5. Manual Tagging Strategies and Best Practices

While automated systems provide a good baseline, deliberate manual tagging remains essential for the best searchability, particularly for specialized content, artistic images, or collections that require precise categorization and nuanced description. Effective manual tagging begins with a consistent vocabulary and hierarchical structure that reflect how people actually search, so that tags are both comprehensive and intuitive to use later. Professional photographers and digital asset managers often develop standardized tagging protocols with multiple levels of specificity, from broad categories like "landscape" or "portrait" to highly specific descriptors such as "golden hour mountain reflection" or "candid wedding ceremony moment." The most effective approaches consider how different people might search for the same content, adding synonyms, alternative descriptions, and contextual variations that broaden discoverability. Good manual tagging also distinguishes descriptive tags, which identify what is literally present in the image, from conceptual tags, which capture the mood, purpose, or intended use of the photograph. Batch tagging can significantly speed up work on large collections by applying common tags to a group of related images, while still leaving room to add unique descriptors to individual photos. Finally, regular tag audits help keep the vocabulary consistent and accurate as a collection grows and evolves.
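A controlled vocabulary with synonyms and broader terms can be applied mechanically. In this minimal Python sketch, the small `BROADER` and `SYNONYMS` tables are hypothetical examples: raw tags are mapped to a preferred term, every broader term is added automatically, and anything outside the vocabulary is set aside for the next tag audit rather than accepted silently.

```python
# Hypothetical controlled vocabulary: preferred term -> broader term (or None).
BROADER = {
    "landscape": None,
    "mountain": "landscape",
    "lighting": None,
    "golden hour": "lighting",
    "portrait": None,
    "event": None,
    "wedding": "event",
}

# Synonyms and variant spellings mapped to their preferred term.
SYNONYMS = {
    "mountains": "mountain",
    "peak": "mountain",
    "magic hour": "golden hour",
    "nuptials": "wedding",
}


def normalize_tags(raw_tags):
    """Map raw tags onto the vocabulary and add every broader term.

    Returns (normalized_tags, unknown_tags). Unknown tags are returned
    separately so they can be reviewed during a tag audit.
    """
    normalized, unknown = set(), []
    for raw in raw_tags:
        term = raw.strip().lower()
        term = SYNONYMS.get(term, term)
        if term not in BROADER:
            unknown.append(raw)
            continue
        while term is not None:  # walk up the hierarchy to the root
            normalized.add(term)
            term = BROADER[term]
    return sorted(normalized), unknown


tags, unknown = normalize_tags(["Peak", "magic hour", "Nuptials", "drone shot"])
print(tags)     # preferred terms plus their broader categories
print(unknown)  # candidates for adding to (or rejecting from) the vocabulary
```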

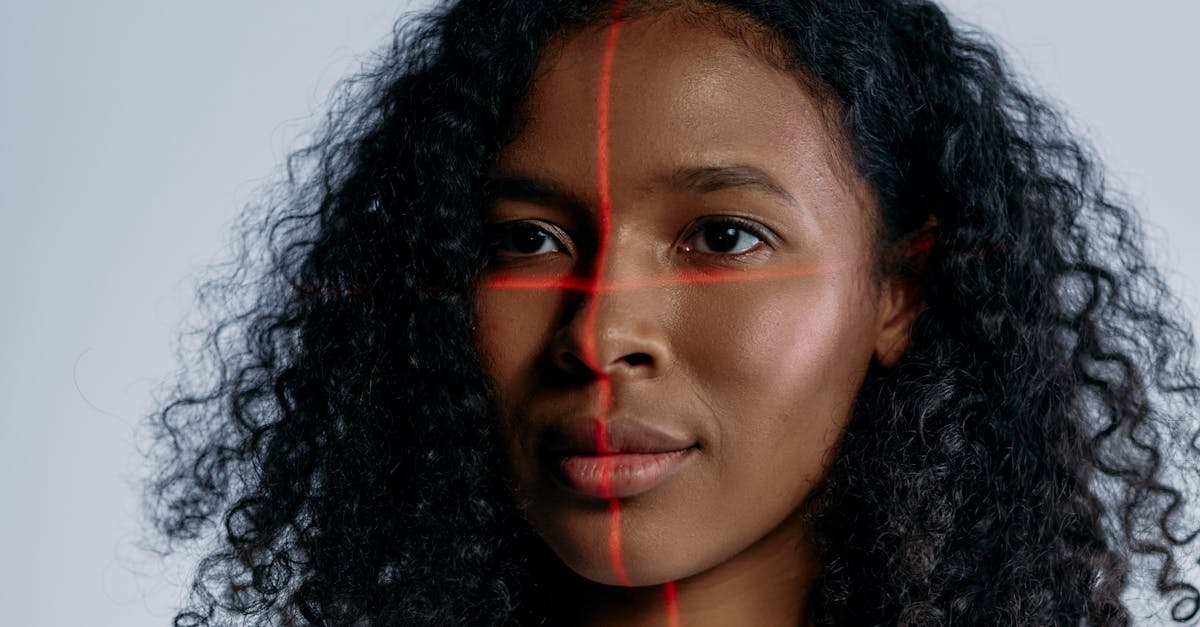

6. Leveraging Facial Recognition for People-Based Search

Facial recognition technology has changed the way we organize and search photo collections, making it possible to find images of specific individuals across large archives in seconds. Modern facial recognition systems analyze facial features and convert each detected face into a numerical representation (often called an embedding) designed to stay similar for the same person across different lighting, angles, and expressions, and to tolerate gradual changes such as aging, new hairstyles, or facial hair. Setting up facial recognition for photo search typically starts with a phase where you identify and label faces in a representative sample of images, after which the system can recognize those individuals automatically in new photos. Privacy considerations are paramount: facial data requires careful attention to data security, consent, and compliance with regulations such as GDPR or CCPA, particularly when images involve minors or are used commercially. Some platforms offer additional features, such as suggesting groupings of related faces, maintaining recognition as people age, or analyzing facial expressions for more nuanced search. Professional photographers working with events, portraits, or documentary projects often find facial recognition invaluable for client delivery and portfolio management, since it can quickly surface every image of a given person across hundreds or thousands of frames.
However, successful implementation requires understanding the limitations of facial recognition technology, including documented accuracy disparities across demographic groups, difficulty with partially visible faces, and the need for ongoing corrections and retraining to maintain performance.
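The learn-from-labeled-examples step can be illustrated with a nearest-neighbour sketch in Python. It assumes some face-recognition model has already turned each face into an embedding vector (the vectors here are toy values), compares new faces to the labeled ones by cosine similarity, and leaves a face unassigned when no match clears the threshold. The 0.8 threshold and the `assign_faces` helper are hypothetical; suitable values depend on the model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def assign_faces(labeled, unlabeled, threshold=0.8):
    """Label new faces by their most similar manually labeled example.

    labeled:   {person_name: [embedding, ...]} from the manual setup phase
    unlabeled: {face_id: embedding} for faces detected in new photos
    Returns {face_id: person_name}, or None when nothing clears the
    threshold (those faces go back to the user to label).
    """
    assignments = {}
    for face_id, embedding in unlabeled.items():
        best_name, best_sim = None, threshold
        for name, examples in labeled.items():
            for example in examples:
                sim = cosine_similarity(embedding, example)
                if sim >= best_sim:
                    best_name, best_sim = name, sim
        assignments[face_id] = best_name
    return assignments


# Toy 3-dimensional "embeddings"; real face models produce much longer vectors.
labeled = {"Ana": [[1.0, 0.0, 0.0]], "Ben": [[0.0, 1.0, 0.0]]}
unlabeled = {"f1": [0.9, 0.1, 0.0], "f2": [0.1, 0.95, 0.05], "f3": [0.0, 0.0, 1.0]}
assignments = assign_faces(labeled, unlabeled)
print(assignments)
```

Each face the user confirms can be appended to `labeled`, which is one simple way the ongoing refinement mentioned above can work.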

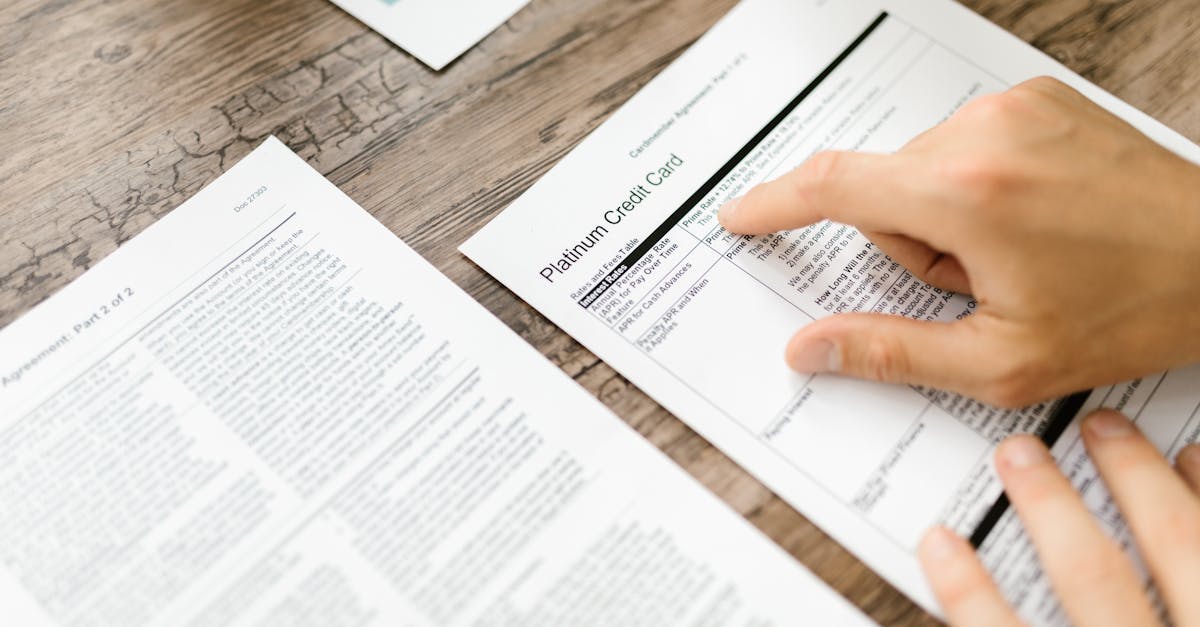

7. Text Recognition and OCR Integration for Document Photos

Optical character recognition (OCR) has become an indispensable tool for making document photos, screenshots, and other images containing text fully searchable, turning static pixels into indexable words. Modern OCR systems recognize printed text reliably in many languages and fonts, and can also read street signs, product labels, and digital displays captured in photographs; handwriting is supported by some engines, but with noticeably lower accuracy. Integrating OCR into a photo management system lets you search for specific words, phrases, or numbers that appear within images, extending search beyond visual elements to textual content. Advanced OCR implementations use machine learning to cope with challenging conditions such as poor lighting, skewed perspectives, complex backgrounds, and partially obscured text, and many report a confidence score for each recognized word or line. Professional applications include legal document management, research archives, business card organization, receipt tracking, and academic research, wherever text inside images must be quickly located and referenced. The technology is equally valuable for photographers and content creators who work with signage, documents, or any images whose text may need to be retrieved later. Accuracy still drops with stylized fonts, handwriting, or degraded image quality, so critical applications should include manual verification. Many modern platforms extract and index text automatically as images are uploaded, so textual and visual search work side by side and overall discoverability improves.
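Under the hood, text search over OCR output usually relies on an inverted index that maps each word to the images containing it. This Python sketch assumes your OCR engine returns per-word results with a confidence on a 0 to 100 scale; the sample data, the cutoff of 60, and the helper names are hypothetical. Low-confidence words are dropped before indexing so that misreads do not pollute search results.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercase and split into alphanumeric words ("12.50" -> "12", "50")."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_ocr_index(ocr_results, min_confidence=60):
    """Build an inverted index: word -> set of photo ids.

    ocr_results: {photo_id: [(recognized_word, confidence_0_to_100), ...]}
    Words below min_confidence are skipped rather than indexed.
    """
    index = defaultdict(set)
    for photo_id, words in ocr_results.items():
        for word, confidence in words:
            if confidence < min_confidence:
                continue
            for token in tokenize(word):
                index[token].add(photo_id)
    return index


def search(index, query):
    """Return the photos whose indexed text contains every query word."""
    tokens = tokenize(query)
    if not tokens:
        return set()
    hits = set(index.get(tokens[0], set()))
    for token in tokens[1:]:
        hits &= index.get(token, set())
    return hits


# Hypothetical OCR output for two photos.
ocr_results = {
    "receipt_01.jpg": [("Corner", 93), ("Coffee", 95), ("Total", 91), ("12.50", 88)],
    "sign_07.jpg": [("Main", 97), ("Street", 96), ("Coffee", 40)],
}
index = build_ocr_index(ocr_results)
print(search(index, "coffee total"))
```

Because "Coffee" on the sign was recognized with low confidence, only the receipt matches a search for "coffee"; lowering `min_confidence` trades precision for recall.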

8. Geographic and Location-Based Photo Organization

Geographic and location-based photo organization uses GPS metadata, reverse geocoding, and mapping to provide spatial search, letting you find images by where they were captured and browse your collection by place. Modern smartphones and many GPS-enabled cameras automatically embed location coordinates in image metadata, which photo management systems can use to display images on interactive maps, group photos by geographic proximity, and answer location-based queries. Reverse geocoding translates GPS coordinates into human-readable names such as addresses, landmarks, cities, regions, and countries, making natural-language queries like "photos from Paris" or "images near the Golden Gate Bridge" possible. Geographic search often includes hierarchical organization that lets you browse at multiple zoom levels, from continent and country views down to individual streets or buildings. Professional photographers and travel enthusiasts benefit particularly, since location data supports portfolio management, client delivery based on shooting locations, and location-based galleries or travel documentation. Privacy considerations are crucial, because GPS metadata can reveal sensitive information about personal movements and locations; review sharing settings carefully and strip location metadata before distributing images publicly.
Advanced geographic systems also incorporate temporal elements, enabling users to view how locations change over time or to find images captured during specific visits to particular places, creating rich contextual search capabilities that combine spatial and temporal dimensions for comprehensive photo discovery.
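Two calculations sit behind most "photos near X" features: converting the degree/minute/second values stored in EXIF GPS tags (together with a reference letter such as N, S, E, or W) into signed decimal degrees, and measuring the distance between two coordinates. This Python sketch uses the haversine formula on a spherical Earth, which is accurate enough for proximity search. The photo coordinates are approximate and illustrative, the helper names are hypothetical, and reading the tags from actual files is left out.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; the sphere is an approximation


def dms_to_decimal(degrees, minutes, seconds, ref):
    """Convert EXIF-style degrees/minutes/seconds plus an N/S/E/W reference."""
    value = degrees + minutes / 60 + seconds / 3600
    return -value if ref in ("S", "W") else value


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two (lat, lon) points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def photos_near(photos, lat, lon, radius_km):
    """photos: {photo_id: (lat, lon)}. Returns ids within radius, nearest first."""
    hits = []
    for photo_id, (plat, plon) in photos.items():
        distance = haversine_km(lat, lon, plat, plon)
        if distance <= radius_km:
            hits.append((distance, photo_id))
    return [photo_id for _, photo_id in sorted(hits)]


# Illustrative coordinates in decimal degrees.
photos = {
    "bridge.jpg": (37.8080, -122.4750),
    "pier.jpg": (37.8087, -122.4098),
    "eiffel.jpg": (48.8584, 2.2945),
}
# Approximate Golden Gate Bridge position, given in DMS form as EXIF stores it.
lat = dms_to_decimal(37, 49, 11.4, "N")
lon = dms_to_decimal(122, 28, 42.0, "W")
print(photos_near(photos, lat, lon, 2.0))
```

For large collections, a spatial index would avoid computing the distance to every photo, but the linear scan keeps the idea visible.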

9. Advanced Search Techniques and Query Optimization

Mastering advanced search techniques and query optimization lets you unlock the full potential of visual search systems, using filters, boolean logic, and multi-parameter queries to locate specific images precisely. Advanced search interfaces typically offer several modes that can be combined, including keyword search, visual similarity matching, metadata filtering, date ranges, and technical constraints such as camera settings or file formats. Boolean operators such as AND, OR, and NOT let you combine criteria into queries like "sunset AND beach NOT people" or "(wedding OR engagement) AND outdoor". The parentheses matter: in standard boolean logic, and in many search engines, AND takes precedence over OR, so "wedding OR engagement AND outdoor" would also match indoor wedding photos. Visual similarity search is a particularly powerful technique that finds images sharing visual characteristics with a reference photo, such as similar compositions, color palettes, subjects, or artistic styles. Advanced users often refine results in stages, starting with a broad category search and applying progressively more specific criteria until only the most relevant images remain. Query optimization also involves understanding how each system interprets and weights metadata fields, so you can structure searches for the platform at hand. Professional photographers and digital asset managers frequently use saved searches and smart collections that update automatically as new images are added, keeping their organization current over time.
Additionally, understanding search analytics and result patterns helps users refine their tagging and metadata strategies, creating feedback loops that continuously improve both search effectiveness and overall collection organization.
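To show why operator precedence matters, here is a small recursive-descent query parser in Python that gives NOT precedence over AND and AND precedence over OR, and treats adjacent terms as an implicit AND. It is a hypothetical sketch for single-word tags with uppercase operators, not the query syntax of any particular photo application; check your own tool's documentation for how it groups operators.

```python
import re

OPERATORS = {"AND", "OR", "NOT"}


class QueryParser:
    """Recursive-descent parser. Precedence: NOT > AND > OR.
    Adjacent terms with no operator between them are treated as AND."""

    def __init__(self, query):
        self.tokens = re.findall(r"\(|\)|[^\s()]+", query)
        self.pos = 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def take(self):
        token = self.peek()
        self.pos += 1
        return token

    def parse(self):
        tree = self.or_expr()
        if self.peek() is not None:
            raise ValueError(f"unexpected token {self.peek()!r}")
        return tree

    def or_expr(self):
        node = self.and_expr()
        while self.peek() == "OR":
            self.take()
            node = ("or", node, self.and_expr())
        return node

    def and_expr(self):
        node = self.not_expr()
        while self.peek() not in (None, "OR", ")"):
            if self.peek() == "AND":
                self.take()  # explicit AND; otherwise AND is implied
            node = ("and", node, self.not_expr())
        return node

    def not_expr(self):
        if self.peek() == "NOT":
            self.take()
            return ("not", self.not_expr())
        return self.primary()

    def primary(self):
        token = self.take()
        if token == "(":
            node = self.or_expr()
            if self.take() != ")":
                raise ValueError("missing closing parenthesis")
            return node
        if token is None or token in OPERATORS or token == ")":
            raise ValueError(f"expected a tag, got {token!r}")
        return ("tag", token.lower())


def evaluate(node, tags):
    """Evaluate a parsed query against one photo's set of lowercase tags."""
    kind = node[0]
    if kind == "tag":
        return node[1] in tags
    if kind == "not":
        return not evaluate(node[1], tags)
    if kind == "and":
        return evaluate(node[1], tags) and evaluate(node[2], tags)
    return evaluate(node[1], tags) or evaluate(node[2], tags)


def search(photos, query):
    """photos: {photo_id: iterable of tags}. Returns matching ids, sorted."""
    tree = QueryParser(query).parse()
    return sorted(
        pid for pid, tags in photos.items()
        if evaluate(tree, {t.lower() for t in tags})
    )


photos = {
    "a.jpg": {"sunset", "beach"},
    "b.jpg": {"sunset", "beach", "people"},
    "c.jpg": {"wedding", "indoor"},
    "d.jpg": {"engagement", "outdoor"},
    "e.jpg": {"wedding", "outdoor"},
}
print(search(photos, "(wedding OR engagement) AND outdoor"))
print(search(photos, "wedding OR engagement AND outdoor"))  # also matches c.jpg
```

Storing the query string and re-running `search` whenever photos are added is, in essence, how a saved search or smart collection stays current.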

10. Future Trends and Emerging Technologies in Visual Search

Visual search technology is likely to keep changing how we interact with and discover visual content, driven by developments in artificial intelligence, augmented reality, and multimodal search. Transformer models and attention mechanisms are enabling a more sophisticated understanding of visual context and relationships, helping search systems interpret complex scenes, recognize narrative elements, and better infer user intent from search patterns and behavior. Augmented reality integration is beginning to enable real-time visual search, where you point your camera at an object or scene to find related images in your collection or similar content on the web, bridging physical and digital visual experiences. Multimodal search is another frontier, combining visual, textual, and audio signals so that systems can interpret spoken queries, analyze video content, and relate different types of media to one another. Newer neural architectures are also improving the recognition of artistic and aesthetic qualities such as style, mood, composition, and cultural or historical context. Some projects are experimenting with blockchain and other decentralized systems as a way to address privacy and ownership concerns in visual content sharing, although these approaches remain largely experimental. Meanwhile, edge computing is bringing sophisticated visual recognition to local devices without requiring cloud connectivity, which addresses privacy concerns while enabling real-time processing and immediate search results.
As these technologies mature and converge, we can expect visual search to become increasingly intuitive, powerful, and integrated into our daily digital experiences, ultimately making the vast universe of visual content more accessible and discoverable than ever before.