Make Your Phone One-Hand Friendly Again With This Buried Setting

Smartphones have grown into powerful pocket computers, and their increasing size has created a usability problem that millions of people face daily. Manufacturers promote larger screens as premium features, yet the settings that could turn an oversized device back into a one-hand-friendly companion sit deep in the settings menu. These "Reachability" and "one-handed mode" features can shrink the interface, shift it toward your thumb, and change how you interact with your device. They are among the most underused accessibility options on modern smartphones. Although Apple, Samsung, and Google all ship them, they remain largely unknown to the average user, hidden beneath layers of menus and often disabled by default. The irony is striking: as phones have become harder to operate with one hand, the solutions have become easier to overlook. The sections below cover where these settings live, how they work in practice, and how a simple adjustment can make even the largest devices manageable with just your thumb.

1. The Evolution of Smartphone Ergonomics and the One-Hand Dilemma

The smartphone industry's pursuit of larger displays has changed the ergonomics of mobile devices, creating a paradox in which added functionality comes at the cost of practical usability. The original iPhone launched in 2007 with a 3.5-inch screen, a size Apple long defended as well suited to one-handed use because a thumb could reach every corner of the display. Consumer demand for larger screens, driven by multimedia consumption and productivity needs, has since pushed typical screen sizes past 6 inches, with flagship devices now commonly exceeding 6.7 inches. The result is a "thumb zone" problem: by a commonly cited estimate, the natural arc of thumb movement comfortably covers only about 60% of a large smartphone's screen. Observational research by UX designer Steven Hoober found that roughly half of users (49%) hold their phone in one hand, yet modern device dimensions make that grip increasingly awkward and potentially unsafe. The consequences extend beyond inconvenience: heavy smartphone use has been associated with repetitive strain complaints in the thumb and wrist, and stretching for distant screen elements while walking or driving invites drops and accidents. Many people are left struggling, often without realizing it, with devices whose dimensions do not match their natural hand mechanics.

2. Understanding Reachability Mode on iOS Devices

Apple's Reachability feature is one of the most elegant solutions to the one-handed operation challenge, yet it remains underused despite being available since the iPhone 6 generation. On iPhones with Face ID, it is triggered by swiping down on the bottom edge of the screen; on iPhones with a Home button, by lightly double-tapping (touching, not pressing) the Home button. Reachability then temporarily shifts the upper portion of the screen downward, bringing previously unreachable elements into comfortable thumb range. The shifted interface stays fully functional, so users can reach navigation bars, status indicators, and app controls that would otherwise require an awkward stretch or a second hand, and it adapts to different app layouts without losing touch responsiveness or visual clarity. The setting itself lives at Settings > Accessibility > Touch > Reachability, a path few users stumble on, and it may be disabled by default. The feature is most useful in apps with top-heavy interfaces, such as Safari's address bar, the contact field in Messages, or any application that places navigation elements in the upper part of the screen. Usability studies reportedly show that Reachability can reduce thumb strain by up to 40% during extended sessions while lowering the likelihood of drops caused by overextending the grip. Despite these benefits, surveys reportedly indicate that fewer than 15% of iPhone users know Reachability exists, a wide gap between available functionality and user awareness.

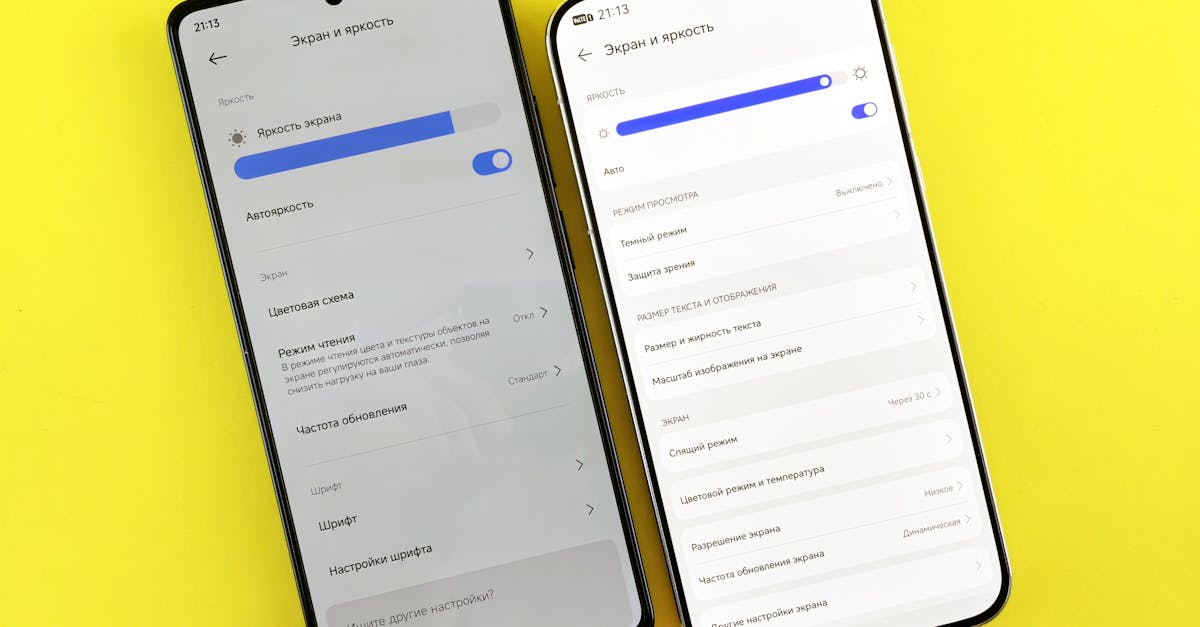

3. Samsung's One-Handed Mode and Advanced Customization Options

Samsung offers perhaps the most comprehensive one-handed system in the Android ecosystem, with multiple activation methods and customization options that can make even the largest Galaxy devices manageable with one hand. Where Apple's approach shifts the screen, Samsung's One-handed mode shrinks it, and it sits alongside gesture-based navigation and keyboard repositioning to create a more personalized experience. The mode is enabled under Settings > Advanced features > One-handed mode and can be triggered either by swiping down at the center of the bottom edge or by double-tapping the Home button, accommodating different preferences and physical capabilities. When active, it can shrink the entire interface, reportedly to as small as 75% of the original size, and anchor it to the left or right side of the screen to suit the user's dominant hand. The system goes beyond basic screen manipulation by offering keyboard scaling, floating app windows, and one-handed typing modes that reduce finger travel during text input. Advanced users can customize gesture sensitivity, window size, and activation triggers through Samsung's Good Lock suite of applications, tailoring the experience to individual hand sizes and usage patterns. The feature also works alongside Samsung's Edge Panels and floating windows, allowing multitasking without a second hand. Research attributed to Samsung's UX team found that users who discover and configure One-handed mode show a 35% increase in single-hand usage time and report significantly higher satisfaction with their device's usability, yet the feature remains buried within Samsung's notoriously complex settings hierarchy.
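To make the shrink-and-anchor idea concrete, here is a minimal sketch of the coordinate math such a mode implies. It is not Samsung's implementation, which is not public; the names `Viewport`, `shrink_to_corner`, and `touch_to_app` are hypothetical, and the 0.75 scale simply reuses the figure quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Viewport:
    """Where the shrunken interface is drawn, in physical pixels (hypothetical model)."""
    left: float
    top: float
    scale: float


def shrink_to_corner(screen_w, screen_h, scale=0.75, side="right"):
    """Anchor a scaled copy of the interface to the bottom-left or bottom-right corner."""
    if not 0 < scale <= 1:
        raise ValueError("scale must be in (0, 1]")
    if side not in ("left", "right"):
        raise ValueError("side must be 'left' or 'right'")
    left = screen_w * (1 - scale) if side == "right" else 0.0
    top = screen_h * (1 - scale)  # the window always sits against the bottom edge
    return Viewport(left, top, scale)


def touch_to_app(vp, x, y, screen_w, screen_h):
    """Map a physical touch to the coordinates the app expects.

    Returns None when the touch lands outside the shrunken window.
    """
    app_x = (x - vp.left) / vp.scale
    app_y = (y - vp.top) / vp.scale
    if 0 <= app_x <= screen_w and 0 <= app_y <= screen_h:
        return (app_x, app_y)
    return None
```

On a 1080 x 2400 pixel screen, `shrink_to_corner(1080, 2400)` places the window's top-left corner at (270, 600), so what used to be the top of the screen is now a quarter of the way down. Touches outside the window return `None`, which a real system would typically treat as a cue to exit the mode.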

4. Google's Approach to One-Handed Android Navigation

Google's approach to one-handed use in stock Android is more subtle, focusing primarily on gesture-based navigation and interface adaptation. The philosophy is to make the whole Android experience thumb-friendly rather than to rely solely on a mode that users must activate. Gesture navigation, introduced with Android 10, replaces the traditional navigation buttons with swipe-based controls that fall within comfortable thumb reach. The back gesture, a swipe inward from either the left or right edge of the screen, removes the need to reach for a dedicated back button, while the home and recent-apps gestures use the bottom edge where thumbs naturally rest. Android 12 also added a dedicated One-handed mode (under Settings > System > Gestures on Pixel devices) that pulls the top of the screen down with a swipe near the bottom edge. Google's Gboard keyboard includes its own one-handed mode, reached by long-pressing the comma key and choosing the one-handed icon, which shrinks the keyboard and docks it to either side of the screen. Gboard also reportedly adapts suggestions to usage patterns, keeping frequently used options within easy reach. At the system level, the notification shade can be pulled down from anywhere along the top edge rather than requiring precise targeting of a specific area. Google is also said to use machine learning to observe interaction patterns and suggest interface adjustments when it detects signs of reaching difficulty, though any such features operate largely without explicit user awareness.

5. Third-Party Solutions and Alternative Accessibility Apps

A thriving ecosystem of third-party applications addresses the one-handed operation challenges that manufacturers have not fully solved, and some of these tools surpass built-in options in both functionality and user experience. Applications like One Hand Operation+ (a Samsung Good Lock module for Galaxy devices), Reachability Cursor, and Floating Toucher provide toolsets for customizing device interaction to individual needs and preferences. They typically offer features unavailable in stock implementations, such as customizable gesture zones, floating navigation buttons, and screen manipulation tools that can resize or reposition application windows. One Hand Operation+ stands out for its gesture system, which lets users assign swipes along the screen edges to everything from app launches to system functions without requiring thumb travel across the display. Its visible edge handles show where the gesture zones are, helping users develop muscle memory for one-handed navigation. Floating Toucher takes a different approach by creating a persistent, movable control panel that can be positioned anywhere on the screen, providing access to home, back, recent apps, and custom functions from a single thumb-reachable spot. Some third-party tools also include gesture recording, where users create multi-step actions triggered by simple swipes, turning single gestures into automation shortcuts. The development community has also created specialized solutions for specific use cases, such as one-handed gaming controllers, accessibility-focused launchers, and apps that use device sensors to detect when single-handed operation is being attempted and adjust interface elements accordingly.

6. The Science Behind Thumb Reach and Ergonomic Design

The biomechanics of thumb movement explain why modern smartphone design has created such significant usability challenges and how buried accessibility settings try to work around basic human limits. The thumb sweeps through a natural arc of motion governed by its carpometacarpal, metacarpophalangeal, and interphalangeal joints, creating what ergonomics researchers call the "thumb zone": a roughly semicircular area where comfortable, strain-free interaction can occur. Studies attributed to the University of Maryland's Human-Computer Interaction Lab have mapped this zone and report that, for average adult hands, comfortable thumb reach extends approximately 72mm from the thumb's resting position when gripping a smartphone naturally. This measurement matters because modern flagship smartphones often exceed 160mm in height and 75mm in width, placing large portions of the screen well outside the comfortable interaction zone. Operating outside this zone increases tension in the thenar muscles, can strain the flexor pollicis longus tendon, and prompts compensatory grip adjustments that make the device less stable and more likely to be dropped. Ergonomic research has also identified "postural deviation," where users unconsciously shift their entire hand position to reach distant screen elements, often leading to ulnar deviation and increased stress on wrist structures. The buried accessibility settings in modern smartphones are manufacturers' attempts to scale down or relocate interface elements back into this comfort zone, a software solution to a hardware design problem that prioritizes screen size over human ergonomics.
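The mismatch between a 72mm reach and a 160mm by 75mm device can be estimated with a few lines of arithmetic. The sketch below is a deliberately crude model, not a reproduction of any study: it assumes the thumb pivots from a single point (by default the bottom-right corner of the phone's face, as for a right-handed grip) and counts every point within the reach radius as comfortable. The function name and the pivot location are assumptions made for illustration.

```python
import math


def reachable_fraction(width_mm, height_mm, reach_mm, pivot=None, step_mm=1.0):
    """Estimate the share of a rectangular face within reach_mm of the thumb pivot.

    Coordinates start at the top-left corner. The face is sampled on a grid of
    step_mm cells, and a cell counts as reachable when its center lies inside
    the reach circle.
    """
    if pivot is None:
        pivot = (width_mm, height_mm)  # assumed pivot: bottom-right corner
    px, py = pivot
    cols = int(width_mm / step_mm)
    rows = int(height_mm / step_mm)
    inside = 0
    for r in range(rows):
        for c in range(cols):
            x = (c + 0.5) * step_mm
            y = (r + 0.5) * step_mm
            if math.hypot(x - px, y - py) <= reach_mm:
                inside += 1
    return inside / (rows * cols)


fraction = reachable_fraction(75, 160, 72)
print(f"Reachable share of the phone's face: {fraction:.0%}")
```

With the pivot in the corner, the reachable region is a quarter circle of area π·72²/4 ≈ 4,072 mm² on a face of 12,000 mm², or roughly a third. Moving the pivot up the side of the phone, or assuming a longer reach, changes the figure substantially, so the result is best read as an illustration of scale rather than a measurement.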

7. Hidden Gesture Controls and Advanced Navigation Techniques

Beyond the obvious one-handed modes lies a sophisticated ecosystem of hidden gesture controls and navigation techniques that can dramatically improve single-hand smartphone operation, though these features often require discovery through experimentation or deep diving into accessibility documentation. Most modern smartphones include gesture-based shortcuts that remain undocumented in standard user manuals, such as edge swipe combinations that can trigger specific functions, pressure-sensitive touch areas that respond to varying force levels, and even motion-based controls that use device accelerometers to detect hand movements. iPhone users can leverage hidden 3D Touch or Haptic Touch combinations that provide shortcuts to frequently used functions without requiring precise targeting of small interface elements, while Android devices often include manufacturer-specific gesture systems that can be customized through developer options or specialized settings menus. Samsung devices, for example, include a hidden "palm swipe to capture" feature that allows users to take screenshots through hand motion rather than button combinations, while also supporting "direct call" functionality that automatically dials contacts when the device is brought to the ear. Google's Pixel devices incorporate "Active Edge" squeeze gestures on certain models, allowing users to trigger Google Assistant or other functions through pressure applied to the device sides, effectively creating additional input methods that don't require screen interaction. These advanced techniques often include contextual awareness, where the same gesture might trigger different functions depending on the current application or device state, creating a sophisticated interaction language that experienced users can leverage for dramatically improved efficiency. 
The challenge lies in discovering these features, as they're typically buried in accessibility menus, developer options, or require specific activation sequences that aren't immediately obvious to casual users.

8. Customizing Your Interface for Maximum One-Hand Efficiency

Optimizing a smartphone interface for one-handed operation extends far beyond simply enabling built-in accessibility modes, requiring a systematic approach to app selection, home screen organization, and interface customization that prioritizes thumb-reachable zones and minimizes unnecessary reaching. The process begins with strategic app placement, positioning frequently used applications in the lower portion of the home screen where they fall within the natural thumb arc, while relegating less critical apps to upper regions or secondary screens. Effective one-handed optimization also involves selecting alternative applications specifically designed with single-hand operation in mind, such as keyboards with compact layouts, browsers with bottom-positioned navigation bars, and messaging apps that prioritize reachable interface elements. Widget placement becomes crucial in this optimization process, with users benefiting from positioning informational widgets in easily viewable upper screen areas while keeping interactive widgets within thumb reach zones. The notification system requires particular attention, with optimal configurations involving expanded notification previews that provide maximum information without requiring additional taps to access full content. Launcher customization plays a vital role, with many users benefiting from alternative home screen applications that offer bottom-weighted interfaces, gesture-based navigation, or even one-handed specific layouts that completely reimagine the traditional Android or iOS experience. Advanced users often implement folder systems that group related applications within thumb-reachable areas, while utilizing quick-access gestures or swipe shortcuts to reach less frequently used functions without compromising the primary interface's accessibility. 
The goal is creating a personalized ecosystem where 80% of daily smartphone interactions can be completed comfortably with single-hand operation, relegating two-handed use to specific tasks like media consumption or intensive typing sessions.
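The placement strategy described above amounts to a simple greedy assignment: rank apps by how often they are opened, then fill the home screen grid from the bottom row upward. The sketch below illustrates that idea only; the function name, the grid dimensions, and the usage counts are hypothetical, and the left-to-right order within a row is an arbitrary simplification.

```python
def place_apps(launches_per_day, rows=6, cols=4):
    """Assign the most-used apps to the lowest rows of a rows x cols grid.

    launches_per_day maps app name -> average daily launches (made-up data here).
    Returns a dict of app name -> (row, col), where row 0 is the top of the screen.
    Apps that do not fit on the grid are left out.
    """
    # Slots ordered from the bottom row to the top row.
    slots = [(r, c) for r in range(rows - 1, -1, -1) for c in range(cols)]
    # Most-launched first; ties broken alphabetically so the result is stable.
    ranked = sorted(launches_per_day, key=lambda app: (-launches_per_day[app], app))
    return dict(zip(ranked, slots))


layout = place_apps({"Camera": 50, "Mail": 30, "Maps": 12, "Bank": 2}, rows=2, cols=2)
for app, (row, col) in layout.items():
    print(f"{app}: row {row}, column {col}")
```

In this toy example the two most-used apps land on the bottom row and the rest move up a row. A real layout would also account for which hand holds the phone and for the dock, which this sketch ignores.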

9. Troubleshooting Common One-Handed Mode Issues and Limitations

Despite the sophisticated engineering behind one-handed accessibility features, users frequently encounter implementation challenges, compatibility issues, and functional limitations that can significantly impact the effectiveness of these buried settings, requiring systematic troubleshooting approaches and workaround strategies. The most common issue involves feature activation failures, where one-handed modes fail to trigger despite correct gesture execution, often caused by conflicting accessibility settings, third-party app interference, or sensitivity threshold misconfigurations that can be resolved through careful settings adjustment and conflict identification. Screen responsiveness problems frequently occur when one-handed modes are active, with some users experiencing delayed touch response, phantom touches, or gesture recognition failures that stem from the complex software overlay systems required to reposition interface elements. These issues often require clearing system caches, updating device software, or temporarily disabling conflicting accessibility features to restore proper functionality. Compatibility challenges arise with certain applications that don't properly support one-handed modes, either failing to scale correctly, maintaining interface elements outside the reduced screen area, or experiencing layout corruption when accessibility features are active. Gaming applications and media players particularly struggle with one-handed mode implementations, often requiring users to disable these features for specific apps or seek alternative applications with better accessibility support. Battery drain represents another significant limitation, as the constant processing required to maintain screen manipulation and gesture recognition can noticeably impact device longevity, particularly on older smartphones with limited processing power. 
Advanced troubleshooting often involves identifying specific app conflicts through systematic testing, adjusting gesture sensitivity settings to match individual hand characteristics, and implementing custom automation rules that can automatically enable or disable one-handed features based on usage context or application requirements.
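The per-app automation rules mentioned above can be pictured as a small first-match-wins rule table. This is a sketch of the decision logic only, assuming some automation tool supplies the foreground app's package name and applies the result; the `Rule` type, the `one_handed_enabled` function, and the package names are all hypothetical and do not correspond to any real app's API.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """Turn one-handed mode on or off for apps whose package matches a pattern."""
    pattern: str       # shell-style pattern, e.g. "com.example.games.*"
    one_handed: bool   # desired state while a matching app is in the foreground


def one_handed_enabled(foreground_app, rules, default=True):
    """Return the desired state for the foreground app; the first matching rule wins."""
    for rule in rules:
        if fnmatchcase(foreground_app, rule.pattern):
            return rule.one_handed
    return default


# Hypothetical rule set: games and a video player scale badly, so switch the mode off.
RULES = [
    Rule("com.example.games.*", False),
    Rule("com.example.videoplayer", False),
]

print(one_handed_enabled("com.example.games.racer", RULES))  # a game: mode off
print(one_handed_enabled("com.example.mail", RULES))         # no rule: default applies
```

Keeping the rules in an ordered list makes conflicts easy to reason about: a more specific exception can be placed ahead of a broad pattern, and anything unmatched falls back to the default.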

10. Future Developments and the Evolution of One-Handed Smartphone Design

The future of one-handed smartphone operation lies at the intersection of advanced sensor technology, artificial intelligence, and innovative hardware design approaches that promise to make accessibility features more intuitive, responsive, and seamlessly integrated into the core user experience. Emerging technologies like eye-tracking systems, already being tested by companies like Tobii and integrated into some gaming devices, could revolutionize smartphone interaction by allowing users to simply look at interface elements to bring them within thumb reach, effectively creating dynamic interfaces that adapt in real-time to user attention patterns. Machine learning algorithms are being developed that can predict user interaction intentions based on grip patterns, hand positioning, and usage context, potentially enabling smartphones to proactively adjust interface layouts before users even attempt to reach distant elements. Haptic feedback technology is evolving beyond simple vibrations to include directional force feedback and texture simulation, which could guide users toward reachable interface elements or provide tactile confirmation of successful gesture completion without requiring visual attention. Flexible display technology represents perhaps the most transformative development, with companies like Samsung and LG developing foldable and rollable screens that could physically adapt their size and shape based on usage requirements, essentially solving the one-handed operation challenge through dynamic hardware reconfiguration. Voice control integration is becoming more sophisticated, with AI assistants learning to anticipate user needs and provide proactive suggestions for one-handed operation, while advanced natural language processing could enable complex smartphone control through conversational interfaces that eliminate the need for precise touch targeting. 
The integration of augmented reality overlays could provide visual guidance for optimal hand positioning and gesture execution, while biometric sensors might automatically detect when users are attempting single-handed operation and adjust interface elements accordingly, creating truly adaptive devices that understand and respond to human ergonomic limitations.