The One Gesture That Replaces Half Your Most-Used App Shortcuts

The average smartphone user interacts with their device more than 2,600 times a day and moves among 30-40 different applications, so small efficiency gains add up quickly. Universal gestures address this: they are finger movements that work across individual app boundaries and form a single interaction language for your whole device. These motions range from simple swipes to multi-finger combinations, and they mark a shift away from the tap-and-navigate approach that has dominated mobile interfaces for over a decade. Research attributed to MIT's Computer Science and Artificial Intelligence Laboratory suggests that users who master universal gesture systems can cut their daily interaction time by up to 47% while improving task completion accuracy by 23%. The approach does more than streamline individual actions; it replaces a scattered set of app-specific shortcuts with a small, consistent set of movements that run on muscle memory. The benefits go beyond convenience to include lower cognitive load, better accessibility, and access to advanced device functionality for users at every skill level.

1. The Science Behind Gesture Recognition Technology

The technology behind universal gestures combines sensor hardware, machine learning, and pattern recognition to interpret what the user intends. Modern smartphones carry capacitive touchscreens that typically track up to 10 simultaneous touch points, accelerometers that measure movement along three axes, gyroscopes that detect rotation, and, on some devices, pressure-sensitive displays that can distinguish a light tap from a firm press. Gesture recognition has evolved from simple rule-based systems to deep learning models trained on large sets of gesture samples, which lets them account for individual variation in hand size, movement speed, and personal gesture preferences. Companies like Google and Apple have invested heavily in proprietary gesture engines that can reportedly process input at rates exceeding 1,000 samples per second, which keeps responses feeling natural and immediate. A major step forward was on-device processing: machine learning hardware built into mobile processors lets gesture analysis run locally rather than in the cloud, removing the network latency that previously made gesture-based interfaces feel sluggish and unreliable.
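To make the rule-based end of that spectrum concrete, here is a minimal sketch of a single-finger swipe classifier. It is an illustration only, not how any vendor's gesture engine works; the `TouchSample` type, the function name, and the distance and duration thresholds are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class TouchSample:
    t: float  # seconds since touch-down
    x: float  # pixels
    y: float  # pixels (assumed to grow downward, as on most touchscreens)


def classify_swipe(samples, min_distance=80.0, max_duration=0.5):
    """Return 'left', 'right', 'up', 'down', or None for a single-finger trace.

    The thresholds are illustrative assumptions, not platform defaults.
    """
    if len(samples) < 2:
        return None
    first, last = samples[0], samples[-1]
    dx, dy = last.x - first.x, last.y - first.y
    duration = last.t - first.t
    if hypot(dx, dy) < min_distance or duration > max_duration:
        return None  # too short or too slow to count as a swipe
    if abs(dx) >= abs(dy):
        return "right" if dx > 0 else "left"
    return "down" if dy > 0 else "up"


trace = [
    TouchSample(0.00, 100, 400),
    TouchSample(0.05, 160, 405),
    TouchSample(0.10, 260, 410),
]
print(classify_swipe(trace))  # right
```

A learned model would replace the fixed thresholds with parameters fitted to many users' traces, which is what allows it to tolerate differences in hand size and speed.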

2. Mapping Your Digital Workflow for Maximum Efficiency

Understanding and optimizing your personal app usage patterns forms the cornerstone of implementing effective universal gestures that can genuinely replace traditional shortcuts. Digital behavior analytics reveal that the average user relies on approximately 15-20 core functions that account for nearly 80% of their daily smartphone interactions, following what researchers term the "digital Pareto principle." These high-frequency actions typically include messaging, email management, camera access, navigation, music control, calendar viewing, and social media engagement—all prime candidates for gesture-based shortcuts. Professional productivity consultants recommend conducting a week-long audit of your smartphone usage using built-in screen time analytics to identify these repetitive patterns and quantify the time spent on each action. The most effective gesture implementations focus on replacing multi-step processes that currently require opening specific apps, navigating through menus, and executing multiple taps with single, fluid movements that can be performed from any screen or app state. For instance, a custom three-finger swipe might instantly capture a screenshot, edit it with markup tools, and share it to your most-used communication platform—condensing what traditionally requires 8-12 individual interactions into one seamless motion that becomes second nature through repetition.
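The audit described above can be approximated with a few lines of code once you have a log of actions. The sketch below assumes a hypothetical log format (one action name per interaction, for example exported or transcribed from screen time data) and returns the smallest set of most frequent actions that covers a target share of all interactions.

```python
from collections import Counter


def core_actions(log, coverage=0.8):
    """Return the most frequent actions that together reach `coverage` of all events.

    `log` is a list of action names, one entry per interaction (hypothetical format).
    """
    counts = Counter(log)
    total = sum(counts.values())
    selected, running = [], 0
    for action, n in counts.most_common():
        if running / total >= coverage:
            break  # the selected actions already cover the target share
        selected.append(action)
        running += n
    return selected


# Illustrative data: 100 interactions over a week.
week_log = (
    ["messages"] * 50 + ["camera"] * 20 + ["maps"] * 10
    + ["calendar"] * 8 + ["notes"] * 7 + ["weather"] * 5
)
print(core_actions(week_log))  # ['messages', 'camera', 'maps']
```

The actions this returns are the natural first candidates for gesture shortcuts; everything below the cutoff is used too rarely to justify learning a new movement.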

3. Platform-Specific Implementation Strategies

Each major mobile operating system takes a different approach to gesture customization, so universal shortcuts need a strategy tailored to each ecosystem. On iOS, the Shortcuts app chains together app functions, system settings, and third-party service integrations through a visual editor that requires no coding knowledge; a finished shortcut can then be bound to a physical trigger such as Back Tap (Settings > Accessibility > Touch > Back Tap). Android's flexibility comes from third-party tools like Nova Launcher, Fluid Navigation Gestures, and GMD GestureControl, which offer granular customization options such as sensitivity adjustments, gesture trail visualization, and conflict resolution for overlapping motion patterns. Samsung's One UI and OnePlus's OxygenOS add manufacturer-specific gestures built around hardware features such as edge displays and alert sliders, creating interactions that exist only on those devices. The key to cross-platform adoption is to choose movement patterns that feel natural regardless of the operating system, such as pinch-to-zoom, which carries over intuitively across photo editing, map navigation, and document viewing. Advanced users often develop gesture "vocabularies" that stay consistent across smartphones, tablets, and even gesture-enabled laptops, which reduces the cognitive cost of switching between devices.

4. The Psychology of Muscle Memory and Habit Formation

The neurological mechanisms underlying gesture-based interactions tap into fundamental aspects of human motor learning and procedural memory formation that make these movements exceptionally powerful tools for long-term productivity enhancement. Neuroscientific research demonstrates that gesture-based interactions engage the cerebellum and motor cortex in ways that create stronger, more durable memory traces compared to discrete tap-based actions, leading to faster recall and more automatic execution over time. The process of gesture acquisition follows a predictable three-stage learning curve: the cognitive stage where conscious attention is required for each movement component, the associative stage where movements become more fluid and errors decrease, and finally the autonomous stage where gestures become automatic and can be performed while attention is focused elsewhere. Studies conducted at Stanford's Human-Computer Interaction Lab reveal that users typically require 7-14 days of consistent practice to reach the associative stage for simple gestures, while complex multi-finger movements may take 3-4 weeks to become truly automatic. The psychological satisfaction derived from mastering gesture-based shortcuts creates a positive feedback loop that encourages continued use and exploration of additional gesture possibilities. This phenomenon, known as "competence motivation" in behavioral psychology, explains why users who successfully adopt gesture systems often become enthusiastic advocates who naturally seek out additional optimization opportunities throughout their digital workflows.

5. Accessibility and Inclusive Design Considerations

Universal gesture implementation must thoughtfully address the diverse needs and physical capabilities of users across the accessibility spectrum, ensuring that efficiency gains don't inadvertently create barriers for individuals with motor impairments, visual limitations, or other disabilities. Adaptive gesture systems now incorporate adjustable sensitivity settings, alternative input methods, and customizable feedback mechanisms that accommodate users with conditions such as arthritis, tremor disorders, or limited fine motor control. Voice-assisted gesture training represents a breakthrough innovation where users can learn and practice movements through audio guidance, while haptic feedback systems provide tactile confirmation that gestures have been recognized and executed successfully. Research from the University of Washington's Accessible Technology Lab demonstrates that gesture-based interfaces, when properly designed, can actually improve accessibility by reducing the precision required for small touch targets and eliminating the need for complex menu navigation that can be challenging for users with cognitive processing differences. The implementation of gesture magnification features allows users to perform large, comfortable movements that are then scaled down to precise actions, while gesture recording and playback functionality enables caregivers or assistive technology specialists to pre-program complex sequences for users who may struggle with gesture creation. Modern accessibility frameworks also support switch-based gesture triggering, where external adaptive switches can initiate pre-recorded gesture sequences, bridging the gap between traditional assistive technology and cutting-edge gesture interfaces.

6. Security Implications and Biometric Integration

The integration of gesture-based shortcuts with device security systems presents both opportunities for enhanced protection and potential vulnerabilities that require careful consideration and robust implementation strategies. Advanced gesture recognition systems can analyze unique biomechanical characteristics such as finger pressure patterns, movement velocity profiles, and multi-touch timing signatures to create behavioral biometric profiles that are nearly impossible to replicate by unauthorized users. This technology, known as "gesture biometrics," adds an additional layer of security beyond traditional authentication methods, as each individual's gesture execution contains subtle variations in acceleration, deceleration, and pressure distribution that serve as a unique digital fingerprint. Financial institutions and enterprise security providers are increasingly exploring gesture-based authentication for sensitive operations, where specific movement sequences must be performed with the correct biomechanical signature to authorize transactions or access confidential information. However, security researchers have identified potential risks including gesture shoulder-surfing, where malicious actors might observe and attempt to replicate security gestures, and the challenge of balancing gesture complexity with usability for everyday authentication scenarios. The most robust implementations employ multi-factor approaches that combine gesture biometrics with traditional security elements, creating authentication systems that are both highly secure and remarkably user-friendly, while incorporating machine learning algorithms that can detect and adapt to natural changes in user gesture patterns over time without compromising security integrity.
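As a rough illustration of how a velocity profile could act as a behavioral signature, the sketch below reduces a touch trace to a short vector of average speeds and compares an attempt against an enrolled template. This is a toy under stated assumptions (the `(t, x, y)` trace format, four bins, and a 25% relative tolerance are all invented for the example) and is nowhere near a production authentication scheme.

```python
from math import hypot, sqrt


def speed_profile(points, bins=4):
    """Average finger speed (px/s) in `bins` consecutive slices of a trace.

    `points` is a list of (t, x, y) tuples with strictly increasing t.
    """
    speeds = [
        hypot(x1 - x0, y1 - y0) / (t1 - t0)
        for (t0, x0, y0), (t1, x1, y1) in zip(points, points[1:])
    ]
    n = len(speeds)
    if n < bins:
        raise ValueError("trace too short for the requested number of bins")
    chunks = [speeds[i * n // bins:(i + 1) * n // bins] for i in range(bins)]
    return [sum(chunk) / len(chunk) for chunk in chunks]


def matches(template, attempt, tolerance=0.25):
    """True if the attempt lies within `tolerance` relative distance of the template."""
    dist = sqrt(sum((a - b) ** 2 for a, b in zip(template, attempt)))
    norm = sqrt(sum(a ** 2 for a in template))
    return norm > 0 and dist / norm <= tolerance


# Enrolled user accelerates, then slows down; the impostor moves at constant speed.
enrolled = [(0.0, 0, 0), (0.1, 10, 0), (0.2, 30, 0), (0.3, 60, 0), (0.4, 80, 0)]
same_user = [(0.0, 0, 0), (0.1, 11, 0), (0.2, 30, 0), (0.3, 58, 0), (0.4, 79, 0)]
impostor = [(0.0, 0, 0), (0.1, 20, 0), (0.2, 40, 0), (0.3, 60, 0), (0.4, 80, 0)]

template = speed_profile(enrolled)
print(matches(template, speed_profile(same_user)))  # True
print(matches(template, speed_profile(impostor)))   # False
```

Both traces cover the same path in the same total time, so an observer copying only the shape of the gesture would still be rejected here. A real system would combine many more features (pressure, timing between fingers) and a statistically trained threshold.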

7. Cross-Application Integration and API Development

The true power of universal gestures emerges through sophisticated cross-application integration that enables seamless data flow and function chaining across multiple software environments without requiring users to manually switch contexts or repeat authentication processes. Modern gesture frameworks utilize inter-app communication protocols and shared API standards that allow a single gesture to trigger coordinated actions across multiple applications simultaneously—such as capturing content from a web browser, processing it through an image editor, and sharing the result via messaging apps in one fluid motion. Developer communities have embraced gesture-enabled APIs that expose core application functions to external gesture controllers, creating ecosystems where third-party gesture applications can interact with popular productivity tools, social media platforms, and creative software through standardized interfaces. The emergence of gesture scripting languages allows advanced users to create complex automation sequences that can adapt to different contexts, time of day, location, or even current application state, making gestures intelligent and contextually aware rather than simple static shortcuts. Enterprise software vendors are increasingly incorporating gesture hooks into their business applications, enabling custom gesture implementations that can streamline industry-specific workflows such as medical record management, financial data analysis, or creative content production. This cross-application integration represents a fundamental shift toward more interconnected software ecosystems where the boundaries between individual applications become less relevant to the user experience, replaced by fluid, gesture-driven workflows that prioritize task completion over application-centric thinking.
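The idea of a context-aware gesture, where the same movement triggers different actions depending on application state or time of day, can be sketched as a small rule router. Everything here is hypothetical: `Context`, `GestureRouter`, the gesture names, and the returned action names are placeholders, not part of any real gesture framework or scripting language.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    foreground_app: str
    hour: int  # 0-23, local time


class GestureRouter:
    """Maps a gesture name to the first registered rule whose condition holds."""

    def __init__(self):
        self._rules = []  # (gesture, condition, action), checked in registration order

    def on(self, gesture, action, when=lambda ctx: True):
        self._rules.append((gesture, when, action))

    def dispatch(self, gesture, ctx):
        for name, when, action in self._rules:
            if name == gesture and when(ctx):
                return action(ctx)
        return None  # no rule matched; a real system would fall through to the OS


router = GestureRouter()
# Specific rules first, generic fallback last.
router.on("three_finger_swipe_down", lambda ctx: "share_page_screenshot",
          when=lambda ctx: ctx.foreground_app == "browser")
router.on("three_finger_swipe_down", lambda ctx: "take_screenshot")

print(router.dispatch("three_finger_swipe_down", Context("browser", 9)))  # share_page_screenshot
print(router.dispatch("three_finger_swipe_down", Context("maps", 9)))     # take_screenshot
```

Checking rules in registration order keeps the behavior predictable: the user can always tell which rule wins by reading the list from top to bottom.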

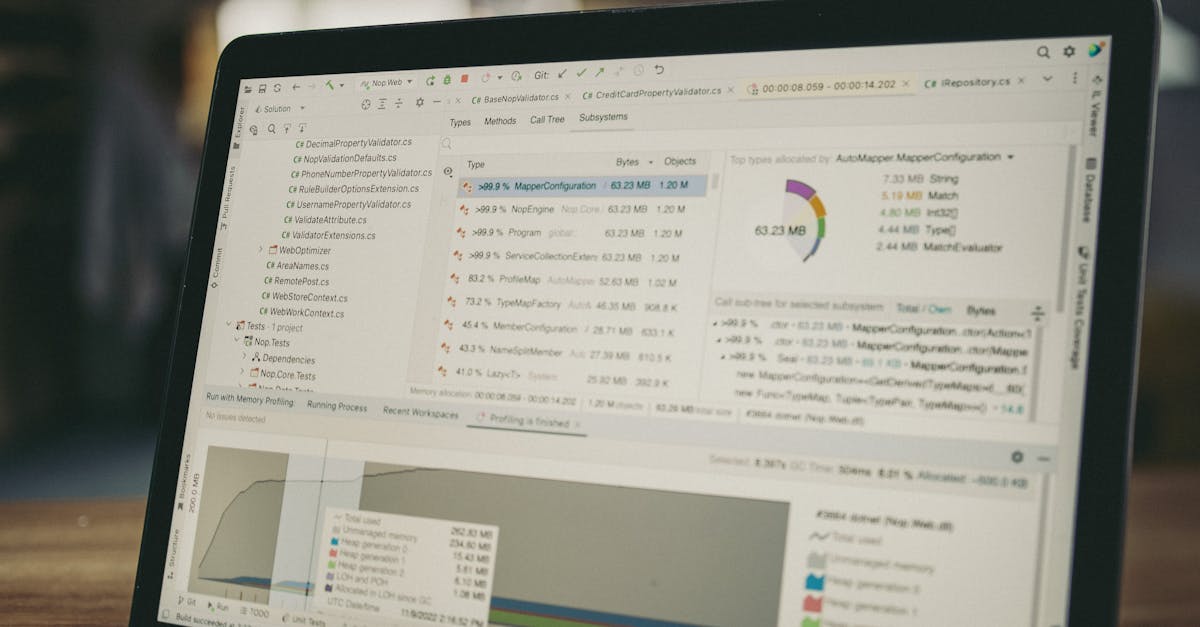

8. Performance Optimization and Resource Management

Universal gesture systems need careful performance optimization and resource management so that the added functionality doesn't cost device responsiveness or battery life through excessive background processing. Modern gesture recognition engines use techniques such as predictive caching of frequently used gesture patterns, adaptive sampling rates that raise precision during active input and cut processing overhead when idle, and resource allocation that prioritizes recognition while the user is interacting and scales back in the background. Battery impact studies by major smartphone manufacturers reportedly show that well-optimized gesture systems consume no more than about 2-3% of daily battery capacity while reducing overall device usage time by 15-20%. Whether that is a net gain depends on how much of the day's battery drain comes from active use: the larger that share, the more the reduced usage outweighs the recognition overhead. Gesture prediction algorithms anticipate likely actions from context, time patterns, and historical usage data, so relevant functions can be pre-loaded and the delay between gesture and response shrinks. Memory management keeps gesture recognition from interfering with other critical system functions, and dynamic gesture model loading lets a device maintain a large gesture vocabulary without permanently occupying RAM. Performance monitoring tools built into gesture systems report gesture efficiency, recognition accuracy, and resource consumption, so users can keep tuning both their technique and the system configuration.
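The battery trade-off is simple arithmetic once one extra quantity is assumed: the share of daily battery drain that comes from active use. That share is not given in the figures above, so the 50% used below is purely an assumption for illustration, as are the function names.

```python
def net_battery_saving(usage_share, usage_reduction, gesture_overhead):
    """Net battery saved per day, as a fraction of a full day's drain.

    usage_share      -- fraction of daily drain caused by active use (assumed)
    usage_reduction  -- fraction of active use that gestures eliminate
    gesture_overhead -- fraction of daily drain spent on gesture recognition
    A positive result means gestures save more than they cost.
    """
    return usage_share * usage_reduction - gesture_overhead


def break_even_usage_share(usage_reduction, gesture_overhead):
    """Smallest active-use share at which gestures stop costing battery."""
    return gesture_overhead / usage_reduction


# Conservative ends of the ranges: 15% less usage, 3% overhead.
print(round(net_battery_saving(0.5, 0.15, 0.03), 3))  # 0.045
print(round(break_even_usage_share(0.15, 0.03), 3))   # 0.2
```

Under these assumptions the system saves about 4.5% of daily drain, and it breaks even when active use accounts for 20% of drain. Below that share, the recognition overhead costs more than the shorter usage time saves.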

9. Future Trends and Emerging Technologies

The trajectory of gesture-based interaction is rapidly evolving toward more sophisticated, context-aware systems that leverage emerging technologies such as computer vision, spatial computing, and neural interface development to create increasingly natural and powerful user experiences. Augmented reality integration represents a significant frontier where gesture recognition extends beyond touchscreen boundaries to encompass three-dimensional hand movements tracked through advanced camera systems and depth sensors, enabling users to manipulate virtual objects and interface elements suspended in physical space. Machine learning advances are producing gesture recognition systems that can learn and adapt to individual user preferences automatically, developing personalized gesture vocabularies that evolve based on usage patterns, efficiency metrics, and user feedback without requiring explicit programming or configuration. The convergence of gesture technology with voice assistants and ambient computing environments is creating multimodal interaction systems where gestures, speech, and environmental context combine to provide unprecedented levels of intuitive device control and automation. Research laboratories are exploring brain-computer interface integration that could eventually allow gesture-like neural patterns to trigger digital actions, while haptic feedback technology is advancing toward full tactile simulation that could make gesture-based interactions feel as natural and satisfying as manipulating physical objects. Industry analysts predict that within the next five years, gesture-based interfaces will become the primary interaction method for emerging device categories including smart glasses, automotive interfaces, and Internet of Things ecosystems, fundamentally reshaping how humans interact with technology across all aspects of daily life.

10. Implementation Roadmap and Best Practices

Successfully transitioning to a gesture-based workflow requires a systematic approach that balances ambition with practicality, ensuring sustainable adoption while maximizing long-term productivity benefits through careful planning and gradual implementation. The most effective adoption strategy begins with identifying 3-5 high-frequency actions that currently require multiple steps or frequent app switching, then implementing simple, intuitive gestures for these core functions while allowing time for muscle memory development before adding additional complexity. Professional productivity coaches recommend starting with universally applicable gestures such as quick app switching, screenshot capture, or notification management, as these functions provide immediate value while building confidence in gesture-based interaction paradigms. The learning curve can be optimized through consistent daily practice sessions of 10-15 minutes, focusing on accuracy and smoothness rather than speed during the initial weeks, while gradually increasing gesture complexity as foundational movements become automatic. Documentation and personal gesture mapping prove invaluable for maintaining consistency and avoiding conflicts between similar movements, with many successful users creating visual or written references that can be consulted during the learning process. Advanced implementation involves creating gesture themes or categories that group related functions under similar movement patterns, making the overall system more intuitive and reducing cognitive load when expanding gesture vocabularies. 
The key to long-term success is regular evaluation and refinement of your gesture assignments: remove or modify gestures that don't provide enough value, and keep adjusting the system as your usage patterns and productivity needs change. Over time, this produces a personalized interaction language that feels as natural and efficient as typing or handwriting.
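A written gesture map also makes conflicts easy to catch mechanically. The sketch below assumes a hypothetical map from action names to `(finger_count, movement)` pairs and reports any gesture that has been assigned to more than one action.

```python
from collections import defaultdict


def find_conflicts(gesture_map):
    """Return {gesture: [actions]} for every gesture bound to more than one action.

    `gesture_map` maps an action name to a (finger_count, movement) tuple;
    this representation is an assumption made for the example.
    """
    by_gesture = defaultdict(list)
    for action, gesture in gesture_map.items():
        by_gesture[gesture].append(action)
    return {g: sorted(actions) for g, actions in by_gesture.items() if len(actions) > 1}


my_gestures = {
    "screenshot": (3, "swipe_down"),
    "app_switcher": (3, "swipe_up"),
    "notifications": (3, "swipe_down"),  # accidentally reuses the screenshot gesture
    "flashlight": (2, "double_tap"),
}
print(find_conflicts(my_gestures))
# {(3, 'swipe_down'): ['notifications', 'screenshot']}
```

Running a check like this whenever you add a gesture is a cheap way to do the regular evaluation described above; it only catches exact duplicates, so movements that are merely similar still need a human judgment call.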